Healthcare effects and evidence robustness of reimbursable digital health applications in Germany: a systematic review

The objective of this systematic review of DiGA approval studies was two-fold: first, to give an overview of the study characteristics, measurement instruments, outcomes, and positive healthcare effects relating to these tools; and, second, to assess the RoB of studies submitted by manufacturers to the BfArM for permanent approval for their DiGAs.

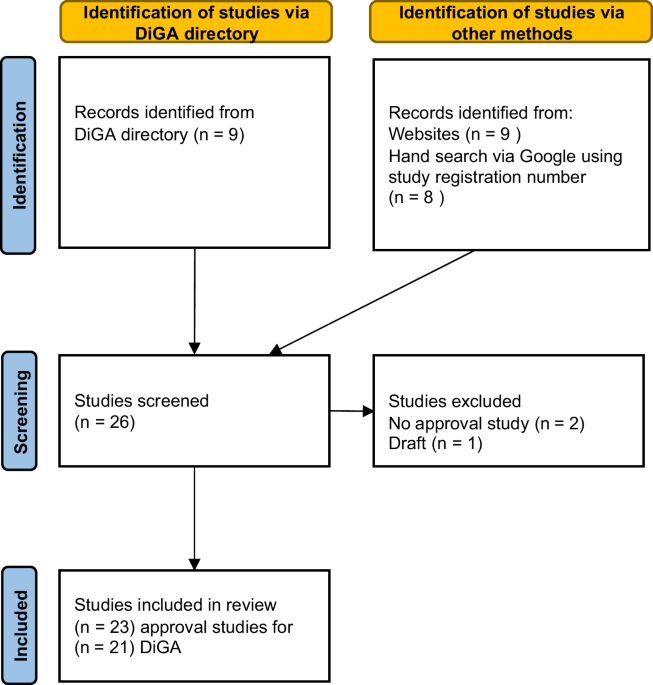

In total, 23 approval studies for 21 DiGAs published as of March 15, 2024, were included in this review.

The results revealed substantial differences among the studies. This was first of all evident with regard to intervention design and study characteristics, including sample sizes, drop-out rates, measurement times, and intervention durations. These variations between interventions reflected the flexibility granted to manufacturers in designing interventions within the German DiGA approval process. Another source of variability between interventions was in the choice and measurement of primary, secondary, and additional outcomes. Despite this, an overview of the outcomes revealed that all approval studies reported statistically significant and predominantly medium to large between-group effects for their primary outcomes. In contrast, the results for secondary outcomes were more variable.

The RoB assessment showed that the overall RoB was high for all approval studies, albeit with variation across different domains.

Thus, the main conclusions of this systematic review are that DiGA approval studies are difficult to compare and that evidence provided for the positive healthcare effects of DiGAs should be critically evaluated, as results are prone to bias.

An overview of measured outcomes was not conducted in previous reviews of DiGA approval studies [7,8]. Thus, no comparison of findings is possible. A discussion of the effects reported by approval studies in comparison to previous research is also impossible: first, due to the wide range of outcomes examined by approval studies, and second, because of the novelty and international singularity of DiGA. The comparability with effects found for other mobile health applications is questionable.

In assessing the RoB, our systematic review added to the literature by evaluating several approval studies that had not been included in former reviews. The overall RoB assessment we present is consistent with two former systematic reviews that examined several DiGA approval studies published before 2022 [7,8]. Both reviews also found a high overall RoB across all examined DiGA approval studies, with a varying RoB across different domains. Comparing our findings on underlying methodological issues in the additional studies with the findings of these two previous studies, it can be concluded that the RoB in newer approval studies does not systematically differ from that of earlier studies. Neither the domain-specific RoB assessments nor the overall RoB ratings showed systematic improvement over time. The lack of systematic improvement over time may in part be explained by the fact that the methodological requirements outlined in the DiGA fast-track guidelines have remained largely unchanged since their introduction.

Although not specifically required by DiGA guidelines [2], all approval studies were conducted using a (cluster-)randomized controlled trial design, considered the gold standard for clinical evidence.

Across approval studies, there were substantial differences in sample sizes and drop-out rates, with very small and very large examples of each. This variation may affect the interpretability and comparability of the study results. However, as emphasized in the DiGA fast-track guidelines, both sample sizes and drop-out rates should only be evaluated in comparison with similar interventions [2]. Thus, a small sample size or a high drop-out rate is not necessarily unfavorable. In several approval studies, the observed drop-out rates were higher in the intervention than in the control group. Assessing the causes and implications of this phenomenon is more difficult and does not allow for definitive conclusions.

Possible explanations for drop-outs in the intervention group might be that the app does not meet expectations, for example, with regard to an observed positive healthcare effect, or might not align with participants’ daily routines. However, the literature suggests that different factors underlie high drop-out rates in digital interventions. A meta-analysis by Torous et al. [52] of drop-out rates in clinical trials of smartphone apps for depressive symptoms found no significant differences in drop-out rates between intervention and waitlist control groups. Torous et al. identified in-app mood monitoring and the provision of human feedback as key factors in reducing attrition rates, while features of intervention design, such as including waitlist or placebo controls, clinical vs. non-clinical populations, and therapeutic approaches to depression, had no effect on drop-out rates.

Beyond clinical trials, high drop-out rates in the intervention group also indicate a challenge in translating DiGAs into real-world healthcare settings, particularly regarding adherence and utilization following prescription. Reasons for low adherence may include insufficient user support, lack of individualization, usability issues, and poor integration into routine care [53,54]. Ultimately, low utilization may negatively impact the overall effectiveness of DiGAs.

Some interventions had a relatively short duration of three months or less, and in some cases, no follow-up assessment was conducted. This is in line with the DiGA fast-track guidelines from the BfArM; however, such a short intervention duration and a lack of follow-up are questionable study design elements. In practice, DiGAs are often prescribed multiple times or for a longer duration, as they mostly address chronic diseases. Thus, it may be appropriate to include obligatory analysis of long-term positive healthcare effects of DiGAs as part of the approval process.

Overall, the outlined divergence of approval studies is likely the result of the far-from-strict BfArM DiGA fast-track guidelines, which leave manufacturers a large scope for study design, especially when compared to more strictly regulated processes such as the AMNOG procedure. This leads to low comparability both between DiGA approval studies in general and between DiGAs designed for the same indication.

The reporting of outcomes and the outcome measurement instruments applied by approval studies revealed further marked differences. This is not only attributable to the variety of indications addressed, but also to the fact that approval studies employed different outcomes and measurement instruments even for similar indications. As a result, the diversity of outcomes and measurement instruments makes it difficult to compare approval studies and their reported positive healthcare effects. One way to enhance comparability would be to mandate the use of core outcome sets (COS) [55] in approval studies. For example, for the indications depression and anxiety, a COS is available [56], which comprises four general treatment outcomes (symptom burden, functioning, disease progression, and treatment sustainability) as well as potential side effects of treatments. This COS could have been applied in the approval studies of DiGA for depression and/or anxiety. However, specific COS for digital interventions have yet to be developed and validated.

Registers might also play a valuable role in enabling a comparable, cross-DiGA measurement of predefined outcomes, ideally based on standardized outcome sets. A recent publication by Albrecht et al. [57] illustrates this approach by combining overarching and indication-specific outcomes within the DiGAReal registry for rheumatology patients.

As outlined above, the overall RoB for all approval studies was assessed as high, with different underlying causes. One cause was the suboptimal reporting quality of the included studies. Missing information or imprecise wording sometimes means that a RoB cannot be ruled out; for example, if it is not clear whether an allocation sequence in the randomization process was concealed or not, the possibility that it was not must be considered. Related to this, study protocols were often not publicly available, meaning it was not possible to assess whether data analyses were carried out according to a pre-specified analysis plan. A second cause of poor RoB ratings was the use of inadequate statistical models to deal with missing data due to drop-outs, reflected in the high RoB scores for missing outcome data. Another cause of high RoB was the frequent use of PROMs, such as those assessing improvements in quality of life, combined with the absence of blinding of outcome assessors.

While poor RoB ratings due to suboptimal reporting quality, missing study protocols, and inadequate statistical analyses can be easily avoided by better adherence to the CONSORT reporting guidelines (as already suggested in the DiGA fast-track guidelines) or through the publication of study protocols, the use of PROMs is a more challenging issue.

The use of PROMs to assess DiGA outcomes is an obvious and necessary choice, as the intended effects of DiGA, such as improved quality of life, are often difficult or impossible to measure objectively. Additionally, DiGA interventions are conducted outside of clinical settings and are predominantly self-guided. Consequently, employing a waitlist study design seems reasonable, as the use of “sham” apps for control groups would be difficult to implement and likely methodologically inadequate [7]. Thus, overcoming the bias arising from participants’ awareness of their treatment allocation remains challenging. Nevertheless, it may be worth discussing whether the RoB 2 tool’s classification of PROM usage, an essential part of patient-centered care, as a potential source of bias is always justified or appropriate.

These findings must be interpreted in light of certain strengths and limitations of the present review.

Our review provides an overview of study characteristics, measurements, outcomes, and the effects reported by DiGA approval studies, as well as an assessment of their RoB. Unlike previous reviews [7,8], it also synthesizes the reported effects, which represents significant added value.

The insights gained from this review are valuable for both scientific and practical purposes. For the scientific audience, a comprehensive overview of the positive healthcare effects of DiGA and the associated RoB in the supporting evidence fills a knowledge gap. In practice, this information can offer context and guidance for DiGA prescribers. Also, by highlighting methodological gaps and inconsistencies, it establishes a framework to aid manufacturers and stakeholders in improving the design of future approval studies and the DiGA approval process, particularly a refinement of the fast-track guidelines.

This review also has limitations. We only included DiGA approval studies that demonstrated a positive healthcare effect on the basis of which the DiGA received permanent approval. Studies that failed to demonstrate such an effect, so that the DiGA in question did not receive permanent approval, could not be considered, as these studies are not publicly available for further analysis. The BfArM provides only very limited information on the reasons why permanent approval was not granted to provisionally approved and withdrawn DiGA (see introduction). Moreover, it is not known how many applications for direct permanent approval were rejected by the BfArM.

Identifying all relevant approval studies was challenging. Although the search strategy was adjusted to address this issue by incorporating various search methods, such as the DiGA directory, websites, MEDLINE, and a manual search via Google Scholar, it is possible that not all published approval studies for the 33 DiGAs permanently approved as of March 15, 2024, were identified and included in this review. Retrieval of DiGA approval studies could be facilitated by requiring manufacturers to provide a link to the relevant study in the DiGA directory. Regarding data extraction, it must be noted that not all required information could be extracted from the studies due to the sometimes poor reporting quality. Ambiguities could potentially have been resolved through communication with the study authors; however, this is not in line with the principles of the RoB 2.

However, the RoB 2 guidelines recommend contacting study authors to request study protocols that are not publicly accessible [58]. Yet, in the DiGA approval process, the publication of approval studies and study registration is mandatory. It would therefore be consistent and appropriate if corresponding study protocols were also published as part of this regulatory transparency. Consequently, we refrained from contacting study authors to request unpublished protocols. In cases where no protocol was publicly available, we documented this in the RoB assessment, and the rating in the corresponding section was “no information”. This applied to 11 included DiGA studies. To enhance the clarity of evidence for the positive healthcare effects of DiGAs, it could be beneficial for the BfArM to mandate adherence to reporting guidelines for future approval studies.

Furthermore, the categorization of primary and secondary outcomes—as either “medical benefit” or “patient-relevant improvement of structures and processes”—had to be conducted to the best of our knowledge, due to the lack of clear definitions in the DiGA fast-track guidelines.

In several studies, the statistical significance or effect sizes were not clearly specified for reported outcomes. Consequently, data extraction required the interpretation of the available information to the best of our knowledge. Effect sizes were reported in Table 2 as indicated by the authors of the approval studies. This is because the studies used a wide range of outcomes and a variety of measurement instruments. Independent interpretation and contextualization of the effect sizes would require detailed knowledge of all these instruments and related literature, which was not feasible within the scope of this review.

The quality assessment was conducted across outcomes, taking into consideration the diversity of outcomes in the studies and the lack of comparability between them. Further analyses, such as meta-analysis, could not be conducted due to the diversity of outcomes and conditions applied in the approval studies. Thus, we were limited to a narrative presentation of the results.

The findings of our systematic review have several implications for practice and future research. The RoB assessment, in particular, clearly suggests that the evidence presented for the positive healthcare effects of DiGAs in approval studies should be considered with caution. However, a review of the DiGA fast-track guidelines reveals that the included studies do indeed fulfill the criteria for permanent approval, the granting of which is a discretionary decision made by the BfArM.

Making the permanent approval of DiGAs a discretionary decision may be considered reasonable at first glance, taking into account the diversity of DiGA interventions. However, given the divergence between scientific standards for high-quality studies and the approval criteria for DiGAs established by BfArM guidelines, a discussion on how to narrow this gap is imperative.

As the BfArM continues to grant permanent approval for DiGAs, improving the methodological quality of approval studies is important. Doing so would help to ensure trust in and the quality of DiGAs, which is crucial given the planned approval of certified DiGAs as medical devices of risk class II.

Aiming to improve the methodological quality of DiGA approval studies, the results of this systematic review imply several possible measures that could be adopted by the BfArM and manufacturers.

First, adaptations to the criteria for permanent approval of DiGA could be key to improving the quality of manufacturers’ studies. As methodological problems of early approval studies do not differ from those of later ones, a connection to the fast-track guidelines appears evident.

Thus, to address poor reporting quality, the prospective publication of study protocols and reporting according to CONSORT guidelines should be mandatory. To improve the comparability of approval studies and of the reported effectiveness of DiGAs, the BfArM should demand clear rationales for outcome selection and recommend adherence to COS. Also, a minimum intervention duration and the conduct of a follow-up should be recommended, as DiGAs often address chronic diseases and are thus prescribed multiple times and for more than 90 days at a time.

To address the RoB resulting from the inadequate use of statistical models for dealing with missing data, e.g., last observation carried forward (LOCF), the BfArM could provide details of recommended models in its guidelines. These criteria should be communicated transparently to DiGA manufacturers via the DiGA fast-track guidelines to ensure clarity and consistency in expectations. The consequences of inadequate reporting quality and methodological flaws should also be clearly communicated.
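To make the concern about LOCF concrete, the following minimal sketch shows what the method does to a single participant's record. The function name `locf` and the symptom scores are hypothetical and purely illustrative; they are not taken from any approval study.

```python
from typing import List, Optional


def locf(values: List[Optional[float]]) -> List[Optional[float]]:
    """Fill each missing value (None) with the most recent observed value."""
    filled: List[Optional[float]] = []
    last: Optional[float] = None
    for v in values:
        if v is not None:
            last = v
        # Values missing before any observation stay None.
        filled.append(last)
    return filled


# Hypothetical symptom scores at baseline, mid-intervention, post-intervention.
# The participant dropped out before the post-intervention measurement.
dropout = [20.0, 18.0, None]

print(locf(dropout))  # [20.0, 18.0, 18.0]
```

LOCF thus assumes that a participant's state stays frozen at the last observed value after drop-out. Depending on the true course of the condition, this can over- or underestimate the treatment effect, which is why guidance on more appropriate models would be useful.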

Second, incorporating real-world evidence as a mandatory component of the approval process could help strengthen the evidence base by enabling the monitoring of DiGA performance. The introduction of an application-accompanying performance measurement (in German “anwendungsbegleitende Erfolgsmessung”, AbEM) for DiGA in Germany from 2026 [59] might be a valuable step in this direction.

Third, the BfArM should increase the transparency of the approval process. As permanent approval of a DiGA is a discretionary decision by the BfArM, the criteria used to evaluate the quality of DiGA approval studies must be publicly available. It would also be reasonable for the BfArM to apply standardized instruments, such as the RoB tool, for evaluating approval studies as part of its decision-making process. To further enhance transparency, the BfArM should also publish more detailed information on rejected applications for permanent approval and the rationales behind these decisions. In doing so, the BfArM could enhance trust in its decisions and provide an example of best practice for manufacturers designing future DiGA approval studies. Relatedly, the BfArM should also be transparent about how it handles violations of, or exceptions to, the 12-month publication deadline for approval studies of permanently approved DiGAs. According to our research, as of June 02, 2025, the 12-month deadline had expired for five permanently approved DiGAs. Clear communication on whether and how such delays affect the approval status would strengthen accountability and provide important guidance for manufacturers and international stakeholders alike.

Beyond the recommendations for improving the approval process in Germany, this systematic review offers important insights and valuable lessons for the international context. As Germany has been a pioneer in establishing reimbursable digital health applications, other countries, including France, Belgium, and Austria, have already modeled their processes after the German fast-track procedure [60]. Other countries may follow in the future. Therefore, a key takeaway from this review is the importance of the early implementation of adequate mechanisms in the approval process to systematically identify methodological weaknesses in approval studies for DiGAs. Ensuring transparency around these mechanisms could enhance international comparability and foster the adoption of best practices globally.

Taken together, the findings of this systematic review highlight that DiGA approval studies exhibit potential for methodological improvement and should be closely monitored. In an update of this systematic review, we will review approval studies published after March 15, 2024. While the focus of the present review was on outcomes measured post-intervention, the focus of the next review will be on follow-up measurements and evidence for the long-term positive healthcare effects of DiGAs.
